As data storage and computing resources have moved into the cloud over the past decade, demand on data centers for storage and data-transfer capacity has increased dramatically (Ref. 1). Stored data must be accessed and transferred constantly to and from these data centers as well as within them, which has sharply increased the load on the backplane-to-backplane interconnects between the servers in the data center. A single large data center can require hundreds of thousands of interconnects.

Key factors driving higher-capacity data center interconnect bandwidth include:

• Gigabit Ethernet growth: The growth of 10 Gigabit Ethernet (GE), 25 GE and 40 GE network adapters, and more recently 100 GE and soon 400 GE connections (Ref. 4)

• Cloud IT: A single request can trigger multiple data exchanges between servers in one data center, as well as servers in different data centers.

• New storage technology: Flash memory, solid-state drive (SSD) storage and software-defined storage enhance the attractiveness of cloud storage

• Continuous data availability and mobility: Distributing virtual computing and storage resources across many physical devices

• Dynamic allocation of resources: Dynamic allocation of server, storage and network resources for resource sharing

Interconnects in action

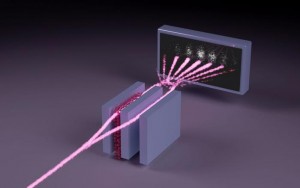

As seen in the diagram of the typical Clos topology (Figure 1), which is used in many of the largest data centers (Ref. 1), a massive number of interconnects is needed to maintain efficient communication among the thousands of servers in an individual data center and with other on-campus data centers.

Interconnect Clos topology – This provides a more direct interconnection between top-of-rack (TOR) switches and all other servers; however, it also requires a large number of interconnects. Every leaf switch in the leaf-spine architecture connects to every spine switch in the network fabric. The spine switches have the same level of connection to the leaf switches as the leaf switches have to the TOR switches.
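To get a feel for how quickly the interconnect count grows in such a fabric, the sketch below counts links in a simplified model. It assumes, purely for illustration, that every switch in one tier has exactly one link to every switch in the next tier and that each TOR switch serves a fixed number of servers. The function names and the switch counts are hypothetical and are not taken from any real deployment.

```python
def full_mesh_links(tier_sizes):
    """Count links when adjacent tiers are fully meshed (one link per switch pair).

    tier_sizes lists the number of switches per tier, e.g. [TOR, leaf, spine].
    This is a simplifying assumption; real fabrics may use pods or parallel links.
    """
    if len(tier_sizes) < 2:
        raise ValueError("need at least two tiers")
    if any(n < 1 for n in tier_sizes):
        raise ValueError("every tier needs at least one switch")
    # Each pair of adjacent tiers contributes (lower tier size) x (upper tier size) links.
    return sum(lower * upper for lower, upper in zip(tier_sizes, tier_sizes[1:]))


def total_interconnects(tors, leaves, spines, servers_per_tor):
    """Server-to-TOR links plus all switch-to-switch links in the simplified model."""
    server_links = tors * servers_per_tor
    switch_links = full_mesh_links([tors, leaves, spines])
    return server_links + switch_links


if __name__ == "__main__":
    # Hypothetical sizes chosen only to show the scaling.
    print(total_interconnects(tors=48, leaves=16, spines=4, servers_per_tor=40))
```

Because the links between two tiers scale with the product of their switch counts, doubling both tiers quadruples the interconnects between them in this model.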

As data rates at the backplane moved beyond 10 Gb/s to 25 Gb/s and onward toward 800 Gb/s, the copper connections traditionally used between the backplane and the top-of-rack (TOR) switch, and between the TOR and leaf switches, have migrated to optical interconnects to accommodate the higher data rates (Ref. 2). This migration requires more optical fibers in place of copper connections, along with more capable optoelectronic transceiver components that are cost effective, compact and energy efficient. It also drives the need for new technology and designs for both the active and passive components: the transceiver components traditionally used at these data rates in long-haul systems are generally not appropriate for the data center, since they are configured for longer-distance links and therefore cost more, consume more power and generally have a larger form factor.

Optical interconnect bandwidth enhancement using single-mode fiber (SMF), multimode fiber (MMF), coherent digital optical transmission, dense wavelength division multiplexing (DWDM), multiple spatial modes, increased data rates and other techniques will all come into use as optical data center interconnects start to require data transfer rates approaching those of long-haul fiber connections. Energy consumption is a critical consideration: the correct choice of optical interconnect can minimize it and the associated heat dissipation (Ref. 3), and can influence the optical design and the optical filters required.

Optical filters in the data center

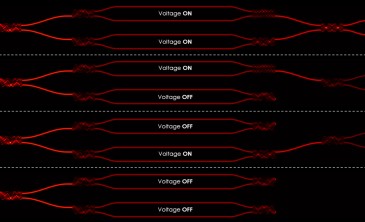

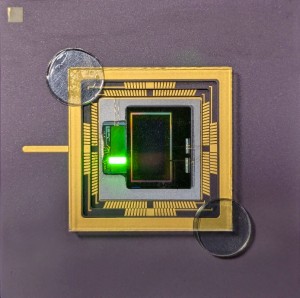

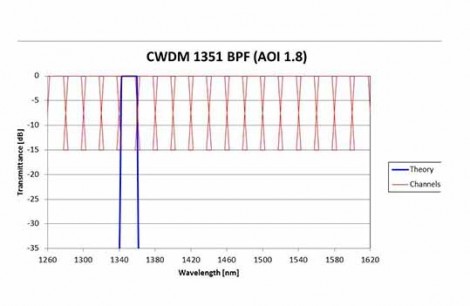

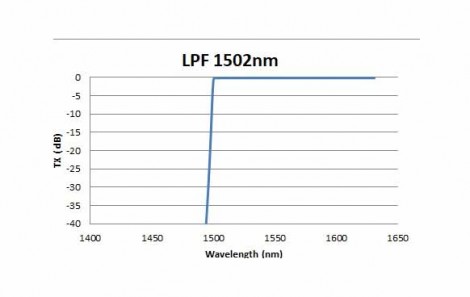

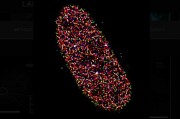

The optical transceivers at each end of the fiber interconnect often use optical filters to manage the different wavelength channels used in WDM, CWDM, DWDM and other multi-wavelength configurations. The optical filters used in these shorter interconnect applications use the same base filter coating technology as filters used in long-haul and metro applications; however, the optical design, filter size and thickness are adjusted to meet the unique requirements of these ultra-compact products. As always, maintaining the required optical performance at the lowest possible cost is critical, and a group of filter solutions separate from traditional telecom offerings is now available to address these needs. Custom filter solutions for interconnect applications are the norm, and filter manufacturers are moving quickly to meet these needs. The filters used in these products include the typical single-wavelength bandpass and edge-pass filters used in single-channel WDM systems, such as the CWDM bandpass filter (Figure 2) and the DWDM edge-pass filter (Figure 3). Etalons or other optical filters may also be used in the integrable tunable laser assembly (ITLA) sources used in these systems.

Figure 2: Typical CWDM bandpass filter

Figure 3: Typical DWDM edge pass filter
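The channel plans that such filters have to separate can be computed directly. The sketch below assumes the standard ITU-T grids, which the article does not name explicitly: the 18-channel CWDM grid with 20 nm spacing from 1271 nm to 1611 nm (ITU-T G.694.2), and the DWDM frequency grid anchored at 193.1 THz (ITU-T G.694.1), shown here with 100 GHz spacing. The function names are illustrative only.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre


def cwdm_center_wavelengths_nm():
    """Nominal CWDM channel centers: 1271 nm to 1611 nm in 20 nm steps (assumed G.694.2 grid)."""
    return [1271 + 20 * i for i in range(18)]


def dwdm_channels(n_min, n_max, spacing_ghz=100.0):
    """Return (frequency in THz, vacuum wavelength in nm) for grid indices n_min..n_max.

    Assumes the G.694.1 convention f = 193.1 THz + n * spacing.
    """
    channels = []
    for n in range(n_min, n_max + 1):
        freq_thz = 193.1 + n * spacing_ghz / 1000.0
        wavelength_nm = SPEED_OF_LIGHT_M_PER_S / (freq_thz * 1e12) * 1e9
        channels.append((freq_thz, wavelength_nm))
    return channels


if __name__ == "__main__":
    print("CWDM centers (nm):", cwdm_center_wavelengths_nm())
    for freq_thz, wavelength_nm in dwdm_channels(-2, 2):
        print(f"{freq_thz:.1f} THz -> {wavelength_nm:.2f} nm")
```

Near 1550 nm, a 100 GHz step corresponds to roughly 0.8 nm, against 20 nm between CWDM channels, which illustrates why DWDM filters need much steeper edges than CWDM bandpass filters.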

The advent of data centers for cloud storage and distributed computing is driving the need for ever higher data transmission rates inside the data center. This in turn is expanding the role and capability of optical interconnects and driving rapidly growing demand for the specialized optical filters and optoelectronics used in these centers. The future for all those enabling the cloud is very bright.

References

1. Zhou, X., Optical Fiber Technology (2017), http://dx.doi.org/10.1016/j.yofte.2017.10.002

2. Xie, C., 2017 IEEE Optical Interconnects Conference (OI), 978-1-5090-5016-1/17, pp. 37–38

3. Kahn, J. M. and Miller, D. A. B., Nature Photonics, Vol. 11, January 2017, www.nature.com/naturephotonics

4. Cheng, Q., Bahadori, M., Glick, M., Rumley, S. and Bergman, K., Optica, Vol. 5, No. 11, November 2018, pp. 1354–1370

Written by Robert Bruce, VP Business Operations, Iridian Spectral Technologies
