Pattern recognition is a software technique that is widely deployed in industrial vision systems to search for and locate objects in images. The approach involves teaching a system the characteristics of a known object and then finding a match between those characteristics and the ones found in images captured by a machine vision camera.

Over the past decade, many different approaches have been developed to identify objects in images, each with its own advantages and disadvantages. One of the more common approaches is normalised grey scale correlation. Here, a template of the object to be identified is created and compared with the image captured by the camera. A correlation score is then generated to determine how well the image matches the template. To accommodate objects that are rotated, many software packages that use this technique let a user set tolerance ranges for rotation and scale, so that objects can be identified regardless of their orientation.
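As a rough illustration of normalised grey scale correlation, the following minimal sketch slides a small template over an image and scores each position. The function names (`ncc`, `best_match`) and the toy pixel values are assumptions made for this example and are not taken from any particular vision package. The example is translation-only; the rotation and scale tolerances mentioned above are not modelled.

```python
import math

def ncc(template, patch):
    """Normalised correlation between two equally sized grey-scale blocks."""
    t = [v for row in template for v in row]
    p = [v for row in patch for v in row]
    mean_t = sum(t) / len(t)
    mean_p = sum(p) / len(p)
    dt = [v - mean_t for v in t]
    dp = [v - mean_p for v in p]
    denom = math.sqrt(sum(a * a for a in dt) * sum(b * b for b in dp))
    if denom == 0:
        return 0.0  # flat block: correlation is undefined, treat as no match
    return sum(a * b for a, b in zip(dt, dp)) / denom

def best_match(image, template):
    """Slide the template over the image; return (score, row, col) of the best fit."""
    th, tw = len(template), len(template[0])
    best = (-2.0, -1, -1)  # any real score lies in [-1, 1]
    for r in range(len(image) - th + 1):
        for c in range(len(image[0]) - tw + 1):
            patch = [row[c:c + tw] for row in image[r:r + th]]
            score = ncc(template, patch)
            if score > best[0]:
                best = (score, r, c)
    return best

# Toy data (assumed): the object appears at row 1, column 2 of the image,
# but darker and with lower contrast than in the template.
template = [[10, 200], [200, 10]]
image = [
    [50, 50, 50, 50, 50],
    [50, 50, 35, 130, 50],
    [50, 50, 130, 35, 50],
    [50, 50, 50, 50, 50],
]
score, row, col = best_match(image, template)
```

Because the mean is subtracted and the result is divided by the contrast of both blocks, the score is unaffected by a uniform change in brightness or gain, which is why the dimmed copy of the template in the toy image still scores close to 1.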

Geometric, or step-based searching, is an alternative technique in which the vision system software searches for edges in the image. A user trains the system by presenting it with a set of relevant edges to be detected, after which the system creates a model based on the selection. The system software then searches the captured image to find a match with the model. This approach also has the advantage that it can be used to identify images of parts which may be scaled or rotated.

Another type of learning algorithm that is often deployed where an object to be found in an image can take on a finite set of values is a decision tree based search. Here, the features of the objects to be identified in the image are extracted from the image during the training stage and are stored in the system. Grouping such objects in a tree enables the system to search for the objects in the captured image relatively quickly.

Image classification

More recently, a new class of software products has emerged that classify objects using Support Vector Machines (SVMs). These learning methods recognize patterns in images and create a model of the images by representing the features found in them as points in space. New images captured by the system are then mapped into the same space and predicted to belong to a specific category based on where they fall. Although such systems require a high level of training, support vector machines can be especially useful when complex images need to be classified.

In a recent research project undertaken at Stemmer Imaging, however, engineers worked to develop a new approach to pattern recognition and machine learning that would provide systems developers with certain advantages that these older techniques were lacking.

Primarily, they sought to develop a new software tool that would enable three dimensional objects to be identified from an image notwithstanding the orientation and pose of the objects in the image. They also wanted the software to be robust towards variations in lighting conditions and able to identify objects in an image even if they did not have distinctive image structures. Lastly, they wanted the processing time of the new software to be as low as possible.

Initially, the engineers examined the possibility of image category classification using a technique called the “Bag of Visual Words”.

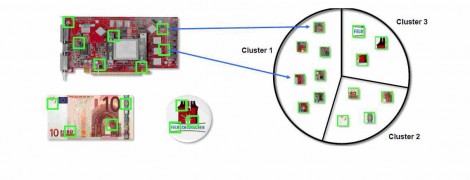

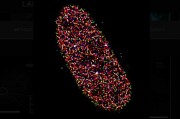

In the “Bag of Visual Words” approach, a "vocabulary" of image features is created. The features from all the images are extracted using a scale-invariant feature transform (or SIFT) algorithm which extracts feature points in corner-like structures of the image. The visual vocabulary is then constructed by reducing the number of features through quantization of feature space. The visual word occurrences in an image are then counted, and a histogram is produced that becomes a reduced representation of the image. The histogram forms the basis for training a classifier and for image classification.

Because images do not actually contain words, a "vocabulary" of image features is first created. Next, the features from all the images are extracted using a scale-invariant feature transform (or SIFT) algorithm which extracts feature points in corner-like structures of the image.

The visual vocabulary is then constructed by reducing the number of features through quantization of feature space using K-means clustering. The visual word occurrences in an image are then counted, and a histogram is produced that becomes a reduced representation of the image. The histogram forms the basis for training a classifier and for the actual image classification. The newly trained classifier can then be used to categorize new images.
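The quantization and counting steps can be sketched in a few lines. This is a minimal illustration under stated assumptions: the descriptors are made-up two-dimensional points (real SIFT descriptors have 128 dimensions), the SIFT extraction itself is not shown, and the helper names (`kmeans`, `word_histogram`) are hypothetical.

```python
def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest(centroids, point):
    """Index of the visual word (centroid) closest to a descriptor."""
    return min(range(len(centroids)),
               key=lambda i: squared_distance(centroids[i], point))

def kmeans(points, k, iterations=10):
    """Plain K-means; seeded with evenly spaced samples to stay deterministic."""
    step = len(points) // k
    centroids = [list(points[i * step]) for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            clusters[nearest(centroids, p)].append(p)
        for i, members in enumerate(clusters):
            if members:  # keep the old centroid if a cluster empties out
                centroids[i] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids

def word_histogram(descriptors, vocabulary):
    """Count how often each visual word occurs among an image's descriptors."""
    hist = [0] * len(vocabulary)
    for d in descriptors:
        hist[nearest(vocabulary, d)] += 1
    return hist

# Assumed toy descriptors pooled from all training images.
training_descriptors = [
    (0, 0), (1, 0), (0, 1),
    (10, 10), (11, 10), (10, 11),
    (0, 10), (1, 10), (0, 11),
]
vocabulary = kmeans(training_descriptors, k=3)

# Assumed descriptors extracted from one new image.
new_image_descriptors = [(0.5, 0.2), (0.1, 0.9), (10.2, 10.5), (0.4, 10.1)]
hist = word_histogram(new_image_descriptors, vocabulary)
```

The resulting histogram has one bin per visual word regardless of how many descriptors an image produces, which is what makes it usable as a fixed-length input to a classifier.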

The engineers discovered that the approach produced robust search results for images in a variety of poses and was indeed robust towards variations in lighting. Its disadvantages are that it only works to identify objects with distinctive image structures and that its processing time is high. What is more, the use of the SIFT algorithm itself incurs a license fee, and the alternatives are either less precise or not significantly faster. Hence the need arose to search for an alternative approach.

New approach

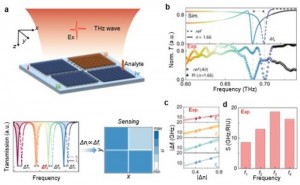

Unlike other software packages, the new software approach does not rely on the extraction of features that describe objects in an image, but instead relies on a multi resolution analysis technique to extract directional details in the image. This is the data that is then used by a Tikhonov regularization classifier -- a classifier not dissimilar to a Support Vector Machine -- to classify objects in an image.
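For readers unfamiliar with Tikhonov regularization, the idea can be shown with a generic regularized least-squares classifier. The sketch below is not the Polimago implementation, whose details are not given here. It assumes two-dimensional feature vectors, labels of +1 and -1, and a hypothetical regularization weight `lam`, and it finds the weights w that minimise ||Xw - y||² + lam·||w||².

```python
def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def train(samples, labels, lam=0.1):
    """Closed-form solution w = (X^T X + lam * I)^-1 X^T y."""
    rows = [list(s) + [1.0] for s in samples]  # append a constant bias input
    d = len(rows[0])
    # For simplicity the bias weight is regularized like the others.
    gram = [[sum(r[i] * r[j] for r in rows) + (lam if i == j else 0.0)
             for j in range(d)] for i in range(d)]
    rhs = [sum(r[i] * y for r, y in zip(rows, labels)) for i in range(d)]
    return solve(gram, rhs)

def predict(weights, sample):
    score = sum(w * v for w, v in zip(weights, list(sample) + [1.0]))
    return 1 if score >= 0 else -1

# Assumed toy feature vectors: one class near the origin, one near (4, 4).
samples = [(0, 0), (1, 0), (0, 1), (4, 4), (5, 4), (4, 5)]
labels = [-1, -1, -1, 1, 1, 1]
weights = train(samples, labels)
```

The `lam` term penalises large weights, which keeps the solution stable when features are noisy or strongly correlated. Like a linear Support Vector Machine, the trained model classifies a new sample by the side of a hyperplane on which it falls.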

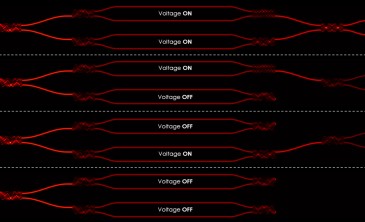

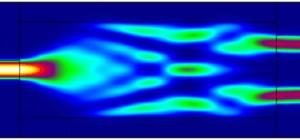

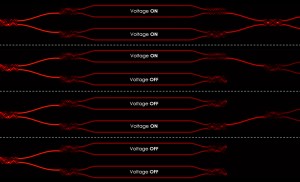

In the Polimago software, a multi-resolution analysis technique segments different parts of an image. By decomposing those parts of the image using a series of filters, an image transform can then reveal different characteristics of the original image through extracting directional details. During the teaching process, the software automatically creates thousands of views of specific areas of an image. The algorithm learns the versatility of a given pattern and can detect a training image reliably from a number of different angles. The multidimensional data associated with specific areas of an image is then classified using Tikhonov regularization, a technique not dissimilar to that used in Support Vector Machines.

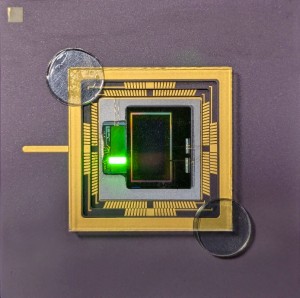

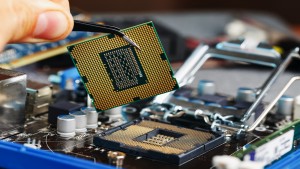

During the training process, an object (such as a chip on a PCB) or an object class (such as a series of numbers) is presented to the software. The software then automatically creates thousands of training images, or randomly generated views of the object (or object class). Because the algorithm learns the versatility of a given pattern, it can then detect an object reliably in a new image even if the object is presented to the system at a number of different angles.
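The idea of generating many artificial views from a single taught pattern can be sketched as follows. The exact transformations that the software applies are not described here, so this example assumes random in-plane rotation and scaling only, with nearest-neighbour resampling. The function name `random_view` and its parameters are hypothetical.

```python
import math
import random

def random_view(image, rng, max_angle_deg=30.0, max_scale_change=0.2):
    """Return a randomly rotated and scaled copy of a small grey-scale image."""
    h, w = len(image), len(image[0])
    angle = math.radians(rng.uniform(-max_angle_deg, max_angle_deg))
    scale = 1.0 + rng.uniform(-max_scale_change, max_scale_change)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    view = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            # Inverse mapping: find the source pixel that lands on (r, c).
            dy, dx = (r - cy) / scale, (c - cx) / scale
            src_r = int(round(cy + cos_a * dy - sin_a * dx))
            src_c = int(round(cx + sin_a * dy + cos_a * dx))
            if 0 <= src_r < h and 0 <= src_c < w:
                view[r][c] = image[src_r][src_c]  # pixels from outside stay 0
    return view

# Assumed toy pattern: a bright bar on a dark background, bright centre pixel.
pattern = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [9, 9, 9, 9, 9],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
rng = random.Random(42)  # fixed seed so the views are reproducible
views = [random_view(pattern, rng) for _ in range(1000)]
```

Each generated view would be used as an additional training sample, so that the classifier sees the pattern under many of the poses it may encounter later.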

Having developed the software, the engineers at Stemmer compared the effectiveness of the Bag of Visual Words classifier with the new search classifier. They discovered some similarities between the two approaches. Notably, both the Bag of Visual Words approach and the new approach demonstrated invariance against geometric transformations and variations in lighting.

However, while the Bag of Visual Words approach relied on the presence of corner structures in the image in order to extract features from the image, the new search classifier could extract features even from arbitrary structures that were not corner based.

Like the Bag of Visual Words approach, the new classifier can also recognize multiple objects in an image. But the new approach is faster, since the search time no longer depends on the number of objects in a classifier. The new search classifier can also be trained from negative samples and can then learn the differences between the positive and negative variations.

To determine the precision that could be achieved with the new classifier, the engineers compared it against the company’s shape finder software, which performs a geometrical search on an image. In doing so, they discovered that the classifier had a precision of 0.1 of a pixel in positioning, and 0.1 degree in orientation.

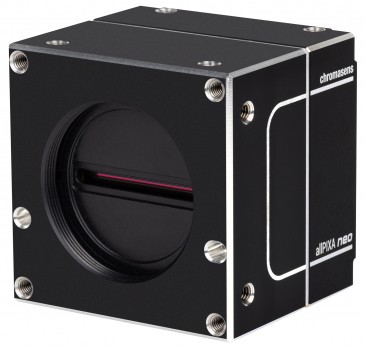

A novel pattern matching tool called CVB Polimago can be used to determine the position, pose and rotation of the items to be picked in a vision-enabled robotic pick and place application. CVB Polimago is a module of Stemmer Imaging’s Common Vision Blox hardware-independent machine vision library. It is different from other pattern matching methods because it generates artificial views of the model during the training phase to simulate various positions of a component in real life.

Next, to discover how effective the software would be in a real world environment, Stemmer Imaging approached one of its customers to trial the software in a pick and place application in a PTB-certified robotic cell. After training a vision-based system to guide the robot pick and place machine, it was discovered that the new software could reliably identify parts with a five degree measurement accuracy even if the parts were tilted by sixty degrees. The demonstration proved that the pose estimation was accurate enough to guide the robot to pick up a component.

Currently, the new software, named Polimago, has been integrated into the latest release of the company’s Common Vision Blox (CVB) software toolbox and is delivered with a variety of application and programming examples. However, the company is not resting on its laurels. Future upgrades to the software will see the Stemmer Imaging staff work to speed up the classifier’s learning stage as well as port the software to ARM and Linux platforms.

Written by Dave Wilson, Senior Editor, Novus Light Technologies Today
