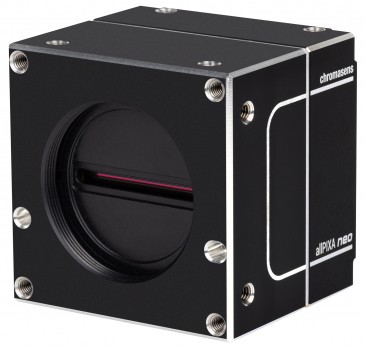

The evolution of industrial camera technology and image sensors used for machine vision applications—such as the inspection of flat panel displays, printed circuit boards (PCBs), and semiconductors as well as warehouse logistics, intelligent transportation systems, crop monitoring, and digital pathology—has placed new demands on cameras and image sensors. Chief among these is the need to balance the drive for higher resolution and speed with lower power consumption and data bandwidth. And in some cases, there’s a push for miniaturization as well.

On the outside, a camera is a housing with mounting features and optics. While this is important for the user, there are substantial challenges on the inside that affect performance, capability and power consumption. Hardware, such as image sensors and processors, and software both play key roles here.

Drawing from what we know, what changes will we see in cameras, processors, image sensors and processing over the next decade? And how will they affect our quality of life?

Image Performance

When you’re choosing a new car, one size does not fit all. The same can be said of image sensors.

It’s true that ever-larger and more powerful image sensors are very attractive for certain classes of high-performance vision applications. In these cases, the size, power consumption, and price of the image sensors aren’t as important as performance. Inspection of flat panel displays is a good example. Some flat-panel manufacturers are now searching for sub-micron defects in premium-quality displays. That resolution is literally fine enough to detect bacteria on the display.

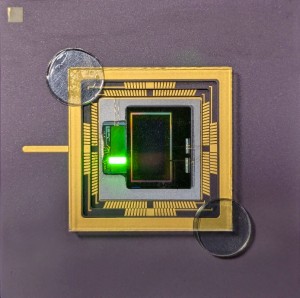

Ground- and space-based astronomy applications demand even higher performance. Researchers at the U.S. Department of Energy’s SLAC National Accelerator Laboratory demonstrated a 3.2-gigapixel imaging solution, equivalent to hundreds of today’s cameras, using an array of smaller image sensors. According to SLAC, the images’ “resolution is so high that you could see a golf ball from about 15 miles away.” This remarkable achievement suggests that what the world’s research labs can accomplish is almost limitless.

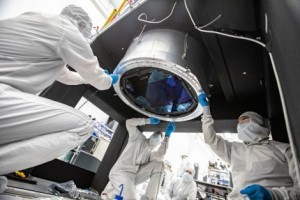

Members of the Large Synoptic Survey Telescope (LSST) camera team prepare for the installation of the L3 lens onto the focal plane of the camera, a circular array of CCD sensors capable of 3.2-gigapixel images. Image via Jacqueline Ramseyer Orrell/SLAC National Accelerator Laboratory

But no matter how high the resolution, well-established 2D imaging is starting to run out of capability. Advanced optical inspection systems don’t actually demand higher speed or more data. They demand more information, and only information that is useful.

Quest for More Information

A handful of trends around the ever-increasing amount of information required for each single pixel are gaining ground.

3D Image Capture

3D image capture provides an extra dimension offering more granularity, detail and detection capability. Applications like battery inspection or, once again, TV/laptop/phone screen fabrication are driving optical inspection sensors to gather even more information. In this case, even finding 2D defects at sub-micron resolution is becoming insufficient, compelling us to figure out their height and possibly even their shape to determine if images are affected by cleanable dust, hard particles or needles among other particulate matter.

Application developers are diligently employing colors, angles and different imaging modalities, such as 3D or polarization (yet another dimension of light), to meet the needs of their customers. In turn, camera manufacturers are working hard to deliver the tools of the trade.

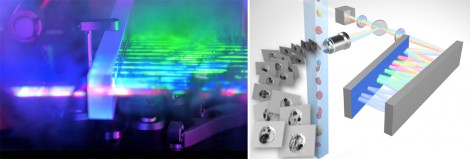

Hyperspectral Imaging

Hyperspectral imaging is another rapidly strengthening trend. Like most remote sensing techniques, hyperspectral imaging makes use of the fact that all objects, due to their electronic structures (for the visible spectrum) and molecular structures (for the SWIR/MWIR spectrum), possess a unique spectral fingerprint based on the wavelengths of visible and invisible light that they absorb and reflect. This reveals a multitude of details not visible to normal color imaging systems (e.g., the human eye or a conventional color camera). The ability to “see” chemistry in materials has wide applications in mineral, gas and oil exploration, in astronomy, and in monitoring flood plains and wetlands. High spectral resolution, separation and speed are useful in wafer inspection, metrology and health sciences.

In these markets, sensor and camera manufacturers are pushing boundaries in speed, cost, resolution and capability. We’re broadening our technology across the spectral range, from X-ray detection at one end to highly accurate thermal imaging at the other, thereby enabling more applications to access these technologies. This more careful, faster and more precise inspection helps manufacturers introduce 100% inspection of, for example, food items: searching for contaminants, measuring contents and screening for food-borne bacteria.

Smarter Inspection

Image processing is data-intensive by nature. High-resolution imagers operating at extremely high frame rates can today produce over 16 GB/s of continuous data. Applications then require capturing, analyzing and acting on this data. The emergence of artificial intelligence (AI) further pushes the boundaries of processing needs.

The Challenge

Take the example of an AI-based camera for traffic light enforcement.

Typically, these applications use a sensor in the range of 10 megapixels, operating at around 60 frames per second. Assuming roughly 1 byte per pixel, this delivers a continuous data stream of about 600 MB/s.

Typical neural network processing today builds on small image frames, on the order of 224x224 pixels in color: about 50 kilopixels at 3 x 1 byte per pixel, or roughly 150 kB per frame. A modern PC’s CPU may enable an object-recognition neural network to run at 20 frames per second, achieving a data throughput of ~3 MB/s. That is about 200x lower than the data rate of the traffic camera, so the possible input data stream is severely throttled.
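The arithmetic behind this gap can be reproduced in a few lines. The sketch below is illustrative only: the function name `stream_rate` is made up for this example, and the 1 byte per pixel used for the traffic camera is an assumption that happens to reproduce the 600 MB/s figure.

```python
def stream_rate(pixels_per_frame: int, bytes_per_pixel: int, frames_per_second: int) -> int:
    """Return the continuous data rate in bytes per second."""
    return pixels_per_frame * bytes_per_pixel * frames_per_second


# Traffic camera: 10 megapixels at 60 frames per second.
# Assumption: 1 byte per pixel (8-bit raw data).
camera_rate = stream_rate(10_000_000, 1, 60)

# Neural network input: 224x224 pixels, 3 color channels of 1 byte each, 20 fps.
network_rate = stream_rate(224 * 224, 3, 20)

# How many times more data the camera produces than the network can consume.
gap = camera_rate / network_rate

print(f"camera:  {camera_rate / 1e6:.0f} MB/s")
print(f"network: {network_rate / 1e6:.2f} MB/s")
print(f"gap:     {gap:.0f}x")
```

With exact frame sizes the gap comes out just under 200x, in line with the rounded figure in the text.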

Intelligent traffic solutions can combine video and thermal cameras with artificial intelligence, video analytics, radar, and V2X with traffic management and data analytics software to help cities run safely and smoothly. Images via Teledyne FLIR

It’s important to note that the output stream should be seen as information, not just as raw data. Following the example of a 600MB/s image stream, the system performs tracking, reading and processing to obtain a few numbers per scene. We might see a number plate or, in a sorting application, even just “eject: yes or no”, whittling the enormous data stream down to a single bit.

While this is no easy task, if achieved, it will be very attractive for downstream data capture, processing and storage. To solve these input data stream restrictions, we need to combine clever sensor engineering, advanced AI processors, and integrated algorithmic solutions.

Powerful (and Power-hungry) Processors

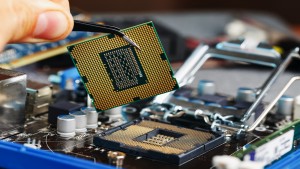

The vast majority of cameras use traditional semiconductors, such as central processing units (CPUs) or field programmable gate arrays (FPGAs). More powerful units may use more powerful FPGAs or graphics processing units (GPUs). So far, these types of processors have been able to follow Moore’s Law, but past performance is no guarantee of the future.

What’s more, GPUs, CPUs and FPGAs consume significant power and, consequently, generate plenty of heat. To some extent, this can be managed with good design, but we’ll need alternative processor and processing architectures to solve these challenges over the long term.

Quantum computing and integrated photonics/electronics processors are lining up to meet the most demanding performance/power requirements of any image-processing application.

Until those technologies become available and commercially feasible, however, newer processor architectures, such as compute-in-memory or integrated, dedicated acceleration, will keep pushing the boundaries of what’s possible.

Principally, manufacturers should consider tera operations per second per watt (TOPS/W) when selecting the right processor for their system. While this is a useful figure of merit for raw power efficiency, we have to remember that the final requirement is actually decisions-per-watt, a metric that doesn’t exist yet.
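To make the distinction concrete, here is a minimal sketch comparing the two figures of merit. Everything in it is hypothetical: the function names, the two processors, and all of the numbers are invented placeholders rather than measurements of any real device, and "decisions per second per watt" is just one possible way to express the decisions-per-watt idea.

```python
def tops_per_watt(operations_per_second: float, power_watts: float) -> float:
    """Raw compute efficiency: tera operations per second per watt."""
    return operations_per_second / 1e12 / power_watts


def decisions_per_second_per_watt(decisions_per_second: float, power_watts: float) -> float:
    """Hypothetical application-level efficiency: useful decisions per second per watt."""
    return decisions_per_second / power_watts


# Invented example values, for illustration only.
# Processor A: more raw compute, running a heavier algorithm.
a_tops_w = tops_per_watt(40e12, 20.0)
a_decisions_w = decisions_per_second_per_watt(500.0, 20.0)

# Processor B: less raw compute, paired with a lighter algorithm.
b_tops_w = tops_per_watt(10e12, 10.0)
b_decisions_w = decisions_per_second_per_watt(400.0, 10.0)

print(f"A: {a_tops_w:.1f} TOPS/W, {a_decisions_w:.0f} decisions/s/W")
print(f"B: {b_tops_w:.1f} TOPS/W, {b_decisions_w:.0f} decisions/s/W")
```

In this made-up comparison, processor A wins on TOPS/W while processor B delivers more decisions per watt, which is why the raw figure alone can be misleading.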

Clever Processing

On the processing side, driven by giants like Microsoft, Apple and Google, we’re seeing advancements in algorithms, both in speed and capability. AI-based solutions are getting lighter, yet more capable and wide-ranging. Traditional algorithm-based solutions utilize modern processor architectures with greater efficiency. The availability of commercial AI software tools, which may be integrated into existing deployment flows, is leading to lower power and cost while simultaneously increasing capabilities.

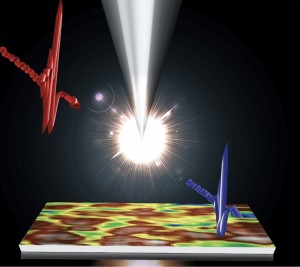

Reduce the Data

In conjunction with advanced image sensor technologies, we’re also seeing the advancement of low-data solutions, including event-based sensing implemented spatially, temporally, and even at the photon level, e.g., replacements for photomultiplier tubes or Electron Multiplying CCDs (EMCCDs).

The Evolve camera range includes world-leading EMCCD sensors designed and manufactured by Teledyne e2v for high quantum efficiency and low read noise. The sensors are integrated into cameras by Teledyne Photometrics for extremely low-light applications like single-molecule imaging and TIRF microscopy.

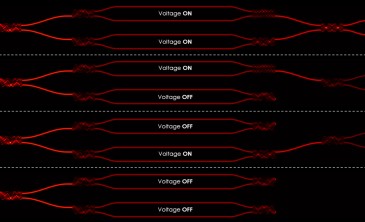

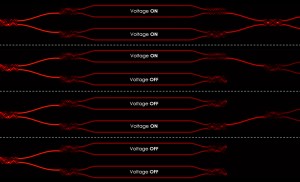

Event-based sensors react to change, filtering irrelevant data directly in the sensor to send only information from pixels that have changed to the processor. This differs from traditional, frame-based sensors, which record and send all pixels for processing, thereby overburdening the system’s pipeline. We often refer to this type of data flow as neuromorphic processing, as its data-processing architecture mimics the ways in which the human brain processes information. While neuromorphic processing can be done in the processor, if we want to achieve optimal data-volume reduction, we need to combine an event-based sensor with a neuromorphic processor.
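The difference from frame-based readout can be illustrated with a small simulation. This is only a sketch under simplifying assumptions: real event-based sensors detect changes asynchronously in each pixel, whereas the code below approximates that behavior by differencing two complete frames. The function name `frame_to_events` and the threshold value are hypothetical choices for this example.

```python
def frame_to_events(previous, current, threshold=15):
    """Emit (x, y, polarity) only for pixels whose brightness changed by at least threshold.

    Assumption: frames are lists of rows of integer brightness values.
    Polarity is +1 for a brighter pixel and -1 for a darker one.
    """
    events = []
    for y, (prev_row, cur_row) in enumerate(zip(previous, current)):
        for x, (old, new) in enumerate(zip(prev_row, cur_row)):
            delta = new - old
            if abs(delta) >= threshold:
                events.append((x, y, 1 if delta > 0 else -1))
    return events


# A static 4x4 scene in which only two pixels change between frames.
previous_frame = [[100] * 4 for _ in range(4)]
current_frame = [row[:] for row in previous_frame]
current_frame[1][2] = 160  # pixel at x=2, y=1 gets brighter
current_frame[3][0] = 40   # pixel at x=0, y=3 gets darker

events = frame_to_events(previous_frame, current_frame)
total_pixels = len(current_frame) * len(current_frame[0])

print(events)
print(f"{len(events)} events instead of {total_pixels} pixel values")
```

A frame-based pipeline would forward all 16 pixel values here, while the event-style output carries only the two pixels that actually changed.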

There are other clever ways to dynamically reduce data transmission, including smart region of interest (ROI) functions and dynamic data-reduction algorithms, which are starting to emerge in higher-end sensors.
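As a rough illustration of the ROI idea, the sketch below crops a fixed window from a frame and reports how much less data would need to be transmitted. It is a software stand-in only: in a real sensor the ROI is applied at readout, and a "smart" ROI would choose the window dynamically. The names `crop_roi` and `reduction_factor` are hypothetical.

```python
def crop_roi(frame, x, y, width, height):
    """Return only the pixels inside the region of interest."""
    return [row[x:x + width] for row in frame[y:y + height]]


def reduction_factor(frame_width, frame_height, roi_width, roi_height):
    """How many times fewer pixels are transmitted when only the ROI is read out."""
    return (frame_width * frame_height) / (roi_width * roi_height)


# An 8x8 test frame in which each pixel value encodes its position (row * 8 + column).
frame = [[row * 8 + col for col in range(8)] for row in range(8)]

# Read out a 4x2 window whose top-left corner is at x=2, y=3.
roi = crop_roi(frame, x=2, y=3, width=4, height=2)
factor = reduction_factor(8, 8, 4, 2)

print(roi)
print(f"data reduced by a factor of {factor:.0f}")
```

The same ratio applies at full scale: reading a small window out of a multi-megapixel frame cuts the transmitted data in proportion to the area that is skipped.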

Integrating specialized data-capture behavior with high-performance processors and intelligent lightweight algorithms gives us the critical combination we need to make decisions right at the edge, where events occur and action is needed. Using this approach, even high-performance, high-information optical inspection systems can operate independently without lengthy, slow and expensive PC connections, allowing them to react to issues faster with 100% monitoring at reduced cost.

Taken together, these advancements will improve the safety and quality of diverse products, agricultural produce and goods while also lowering production costs.

Hunting for Molecules

There’s another level of inspection that escapes traditional methods.

Unlike flat-panel makers—which don’t want to detect bacteria on their displays—there are instances where users need to see bacteria at very high resolution.

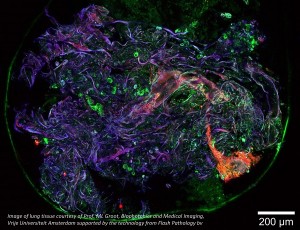

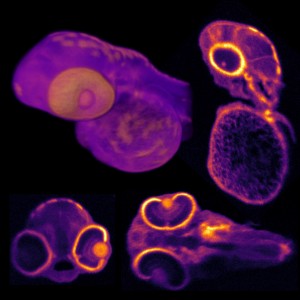

Here we’re using high-magnification microscopy technologies, incorporating methods that are optical, chemical, biological and computational to provide deeper information on the nanostructure of our world.

Image sensors have made it possible to inspect human tissue for cancer cells. While today’s method of detecting cancer in tissue samples is crude, requiring surgical removal of tissue samples—which are then sent to a lab for further study—a new technique, cell cytometry, will one day allow doctors to determine if a sample is cancerous in near real-time, while the patient is still on the operating table. With processing capability moving closer to the pixel, we can now capture the image of a cell, and look at its DNA, in a large lab in a clinical setting. In the future, we’ll move this near-real-time cell inspection from big labs to local labs and finally to the operating table.

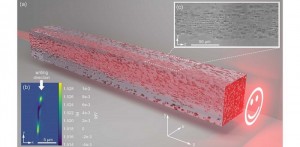

Researchers at the University of Hong Kong developed imaging flow cytometry techniques to reduce the time and cost of blood screenings. Using pulsed laser line-scan imaging with Teledyne SP Devices' digitizer, they were able to process the enormous quantities of resulting data—up to 100,000 single cell images/s and 1 TB of image data in 1-2 minutes—when coupled with deep-learning neural networks and automated “big-data” analysis.

Imagine arming a surgeon with the tools to complete diagnosis while in the middle of an operation. A tumor could be classified and removed in real-time, instead of imposing a stressful waiting period on the patient and compelling the doctor to perform a second surgery. That’s a true improvement in quality of life.

Miniaturization

Size presents another layer of challenge to imaging and vision development. Novel high-capability solutions are generally both expensive and large. The goal of having a desktop genomics analyzer available during in-vivo surgery exemplifies the challenge we must overcome if we’re to realize smaller, more accessible solutions for doctors and their patients.

Ultra-small image sensors, light sources and processors for miniaturized camera applications are coming to the rescue. Tiny chip-on-the-tip CMOS image sensors offer surgeons the enhanced vision they need to perform minimally invasive endoscopic and laparoscopic surgeries more efficiently than in the past. Robot-guided surgery similarly benefits from compact image sensors with very small pixel pitch and image quality optimized for specific medical procedures.

“Chip-on-the-tip” CMOS image sensors for disposable and flexible endoscopes and laparoscopes require compact sensors with a very small pixel pitch and image quality specifically optimized to the medical application.

With powerful and effective tools available to doctors, medical procedures will become faster, less invasive and more successful, benefitting patients, doctors and the medical community as a whole.

A Fast-Approaching Future

Advances in camera and image sensing technology will have a major impact well beyond the factory floor, the warehouse and intelligent transportation systems. Deployed in drones or embedded in handheld devices, the next generation of cameras will use spectroscopy to tell us if there are toxins in our produce or in our drinking water, or if there are environmental toxins in the air we breathe.

Even polymerase chain reaction (PCR) testing for pathogens such as SARS-CoV-2 will be conducted more easily and inexpensively as lower-cost image sensors are used in molecular diagnostic tools. And DNA sequencing, which once required big, expensive machines to sequence the human genome or determine ancestry, will become increasingly accessible and affordable because of innovations in imaging and analytics.

While we can’t forecast with 100% accuracy, we can make predictions based on what we know of market demands and evolving technology. Expect ever more powerful AI processors that offer greater computational muscle at lower power and cooler temperatures. Combined with powerful algorithmic solutions, these processors will give solution providers access to a wider range of suitable applications.

Neuromorphic computing platforms will mimic the efficiency of human vision. More intuitive AI software algorithms will train machine vision models more effectively than ever. Hyperspectral imaging will continue to take us beneath the surface of the Earth. And miniaturized image sensors will enable molecular diagnostics while a patient is still in surgery.

Cameras have come a million miles since the invention of the first photographic cameras in the early 19th century. We’ll travel millions more as innovations in cameras and image sensors inform our ability to understand the world around us and within us.

Written by Matthias Sonder, Teledyne DALSA
