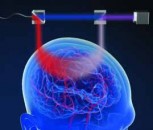

A team of Army researchers uncovered how the human brain processes bright and contrasting light, which they say is a key to improving robotic sensing and enabling autonomous agents to team with humans.

To enable developments in autonomy, a top Army priority, machine sensing must be resilient across changing environments, researchers said.

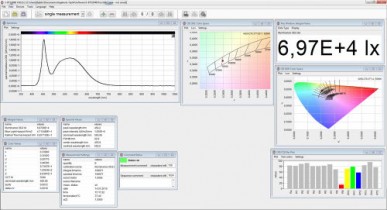

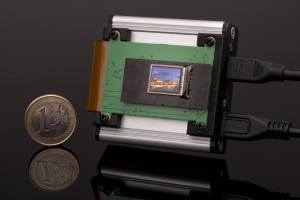

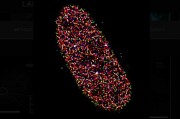

By developing a new display system capable of a 100,000-to-1 luminance ratio, the team discovered how the brain performs its computations under more realistic conditions, so they could build biological resilience into sensors, Harrison said.

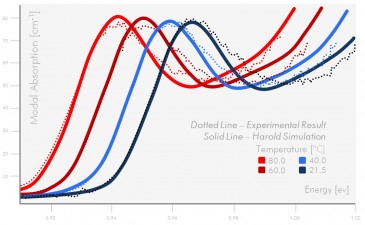

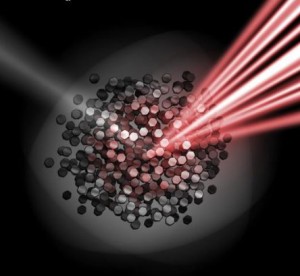

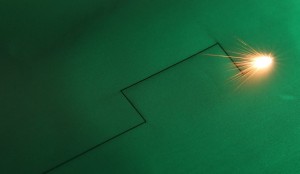

Current vision algorithms are based on human and animal studies with computer monitors, which have a limited range in luminance of about 100-to-1, the ratio between the brightest and darkest pixels. In the real world, that variation could be a ratio of 100,000-to-1, a condition called high dynamic range, or HDR.
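To make the gap between the two ratios concrete, the short Python sketch below expresses each one in decades (powers of ten) and in photographic stops (doublings). The function name `dynamic_range` and the unit luminance values are illustrative assumptions, not taken from the research; only the 100-to-1 and 100,000-to-1 ratios come from the article.

```python
import math


def dynamic_range(brightest, darkest):
    """Return (ratio, decades, stops) for a pair of luminance values.

    Hypothetical helper for illustration: 'decades' is log10 of the ratio,
    'stops' is log2 of the ratio (the number of doublings).
    """
    if darkest <= 0 or brightest < darkest:
        raise ValueError("need 0 < darkest <= brightest")
    ratio = brightest / darkest
    return ratio, math.log10(ratio), math.log2(ratio)


# Arbitrary units; only the ratios matter here.
monitor = dynamic_range(100.0, 1.0)         # typical computer monitor
real_world = dynamic_range(100_000.0, 1.0)  # HDR scene

for name, (ratio, decades, stops) in (("monitor", monitor), ("real world", real_world)):
    print(f"{name}: {ratio:,.0f}-to-1, {decades:.1f} decades, {stops:.1f} stops")
```

In these terms the monitor spans 2 decades of luminance while the real-world case spans 5, a thousandfold increase in ratio.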

The research team sought to understand how the brain automatically takes the 100,000-to-1 input from the real world and compresses it to a narrower range, which enables humans to interpret shape. The team studied early visual processing under HDR, examining how simple features like HDR luminance and edges interact, as a way to uncover the underlying brain mechanisms.
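As a rough illustration of what compressing a 100,000-to-1 input into a narrower range can mean numerically, the sketch below applies a generic log-domain compression. This is a textbook-style stand-in, not the brain's mechanism and not the team's model; the function `compress_luminance`, its parameters, and the 100-to-1 output range are assumptions chosen for the example.

```python
import math


def compress_luminance(lum, in_ratio=100_000.0, out_ratio=100.0, darkest=1.0):
    """Map a luminance in [darkest, darkest * in_ratio] to [1, out_ratio].

    Illustrative assumption: log-luminance is scaled linearly, which is the
    same as raising the relative luminance to a fixed exponent below 1.
    """
    if lum < darkest:
        raise ValueError("luminance below the assumed darkest level")
    # Scale factor between output and input ranges in the log domain (0.4 here).
    exponent = math.log(out_ratio) / math.log(in_ratio)
    return (lum / darkest) ** exponent


# Sample luminances spanning the full input range, one per decade.
samples = [1.0, 10.0, 100.0, 1_000.0, 10_000.0, 100_000.0]
compressed = [compress_luminance(s) for s in samples]

for s, c in zip(samples, compressed):
    print(f"input {s:>9,.0f} -> output {c:6.2f}")
```

The mapping preserves the ordering of luminances while shrinking five decades of input into two, so equal input ratios remain equal output ratios.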

The Journal of Vision published the team’s research findings in the paper “Abrupt darkening under high dynamic range (HDR) luminance invokes facilitation for high contrast targets and grouping by luminance similarity.”

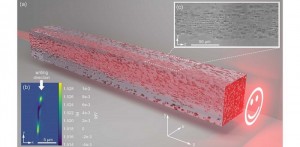

Researchers said the discovery of how light and contrast edges interact in the brain’s visual representation will help improve the effectiveness of algorithms for reconstructing the true 3D world under real-world luminance, by correcting for ambiguities that are unavoidable when estimating 3D shape from 2D information.

In addition to vision for autonomy, this discovery will also help in developing other AI-enabled technologies, such as radar and remote speech understanding, that depend on sensing across wide dynamic ranges.

With their results, the researchers are working with partners in academia to develop computational models, specifically models with spiking neurons, which may have advantages for both HDR computation and more power-efficient vision processing. Both are important considerations for low-powered drones.
