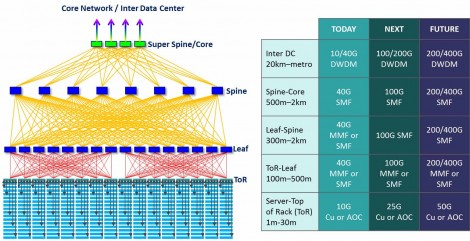

Optical transceivers play a key role in data centers, and their importance will continue to grow as server access and switch-to-switch interconnects require increasingly higher speeds to meet the rising demands for bandwidth driven by streaming video, cloud computing and storage, or application virtualization. Today, mega-scale data centers typically have 10G access ports that interface to 40G switching fabrics, but in the near future, the access ports will increase to 25G and the switching fabrics to 100G. Here, we review the challenges introduced by data center applications on optical modules and describe how the industry is responding to meet the demand.

Challenges in cost per Gbps for optical modules

A single mega data center that houses 100,000s of servers interconnected by a highly redundant horizontal mesh requires a similarly high number of optical links. Because each link must be terminated on both ends by an optical transceiver, the number of transceivers is at least twice the number of optical links and can reach even higher numbers if optical breakout configurations are used. Such high volumes can drive low cost points for optical transceivers, even though these modules operate at the forefront of high data rate. Pricing on the order of $10/Gbps for longer reaches all the way down to $1/Gbps for shorter reaches has been put forward as a challenge to suppliers, which is clearly an ambitious goal given that today’s pricing is 5x to 10x higher, albeit at different data rates or in a different application space.

Cost reductions of this order are difficult to achieve by only making minor refinements of proven approaches to module design and manufacturing. Relaxed specifications, such as lowering the maximum operating temperature, reducing the operating temperature range, shortening the product usage lifetime, and allowing the use of forward error correction (FEC), are examples that can help reduce module cost since it allows module vendors to adopt lower cost designs with higher levels of optical integration, non-hermetic packaging, uncooled operation, or simplified testing.

Transition from 40G to 100G optical modules for the data center

An important factor that determines the applications of optical modules is form factor. Today’s data centers have consolidated around transceivers in the SFP form factor for server access and around QSFP tranceivers for switch-to-switch interconnects. Direct attach copper (DAC) cables are typically used when the distance to the access port is less than 5m, with optical modules or active optical cables (AOC) used for longer reaches. 10G access ports use SFP+ modules, but they will transition to SFP28 when the access speed increases to 25G. Server access does not require reaches beyond 100m, so these modules are typically limited to VCSEL-based transceivers operating over multimode fiber (MMF). However, it is also expected that the ecosystem around 25G lanes will be leveraged in applications such as next-generation enterprise networks which will drive demand for SFP28 modules operating over single mode fiber (SMF) for reaches of 10km to 40km.

Cloud Datacenter network topology and anticipated upgrade path in data rate for server access and switching fabric.

QSFP modules accept 4 electrical input lanes, and operate at 4x the data rate of the corresponding SFP module. Today, 40G QSFP+ is widely deployed in data center switching fabrics. Two somewhat competing schemes exist for the optical interface: parallel single mode fiber (PSM) and course wavelength division multiplexing (CWDM). PSM operates over 8 SMF ribbon cable, where each optical lane occupies a duplex fiber pair. PSM has the potential advantage of a lower module cost because no wavelength multiplexing is required, but cable and connector costs are significantly higher than duplex, resulting in a costlier fiber plant.

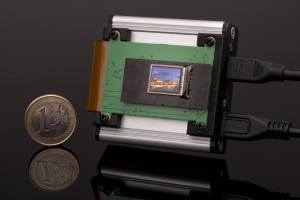

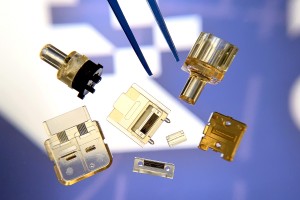

Four generations of 100G pluggable client side transceivers: CFP, CFP2, CFP4, and QSFP28 (left to right).

CWDM operates over duplex SM cabling and uses wavelength division multiplexing to combine 4 lanes in one fiber. Here, the 40GBASE-LR4 Ethernet standard exists as a reference specification for the optical interface. Because the lanes travel in a single fiber strand, CWDM links are compatible with all-optical switching, which can be used for data center traffic management and reconfiguration. A challenge with CWDM modules is that the cost is typically higher than PSM due to the need for additional components such as an optical multiplexer or demultiplexer, but significant costs reductions can be realized by reducing the transmission distance from 10km (LR4) to 2km (MR4 or LR4-Lite).

This illustrates another trend related to data centers, which is that nearly all link lengths are less than 2km. For this reason, the specifications for the next generation of QSFP modules that operate at 100G (QSFP28) have focused on reaches between 500m and 2km over SMF. The CWDM4 and CLR4 MSAs are based on the same wavelength grid as 40GBASE-LR4 but increase the capacity to 100G (4x25G). Alternatively, the PSM4 MSA specifies a 4x25G interface over 500m of PSM cabling. Such QSFP28 modules will be deployed in high volumes as data centers transition from 40G to 100G switching fabrics starting in 2016. In addition, QSFP28-LR4 modules will be necessary for interfacing data center switches to core routers which require Ethernet compliant interfaces (100GBASE-LR4). In this case, similar to 40G, cost-reduced versions that are optimized for 2km are expected to be in high demand.

Optical modules considerations in data centers beyond 100

An important metric for data center switches is front panel bandwidth, which is the aggregate bandwidth of all transceivers that can fit in a 19”-wide and 1RU tall switching hardware. The ability to cool the modules through air flow is one critical constraint, though in many cases the density of electrical connections to the transceiver can become a limiting factor. As a conequence, a common switch can typically accommodate 32 QSFP ports on the front panel. If the ports are QSFP+, the corresponding front panel bandwidth is 1.28Tbps (32 x 40G). With the upgrade to QSFP28, this bandwidth increases to 3.2Tbps.

The upgrade path after QSFP28 is a subject of ongoing discussions. Next generation switching ASICs are expected to have native port speeds of 50G and 128 ports, which correspond to a net throughput of 6.4Tbps. Following the 4x trend set by 40G and 100G, this implies the need for 200G QSFP modules (“QSFP56”). 32 QSFP56 ports on the front panel would result in a front panel bandwidth of 6.4Tbps. The difficulty with this path, however, is that a 200G Ethernet standard does not exist. Discussion on its need has recently begun, but the completion of a standard would be later than 400G Ethernet, which is already in progress.

If 400G ports are assumed, an alternative path to 6.4Tbps front panel bandwidth is to have fewer ports and a larger optical module. A module larger than QSFP is already anticipated for the first generation 400G modules, as the module must accommodate either 16 x 25G or 8 x 50G electrical input lanes, which exceeds the 4 lanes defined for the QSFP. Furthermore, meeting the 3.5W power limit of QSFP modules appears infeasible for some 400G implementations. The 2km duplex single mode fiber standard 400GBASE-FR8 is specified with 8 multiplexed wavelengths modulated by 50G PAM4. This is twice the number of optical lanes of a QSFP28-CWDM4 module, which is already close to the 3.5W limit. Proposals for larger form factors for 400G can be anticipated from groups such as the CFP MSA, which has had large success in 100G with CFP, CFP2, and CFP4. A key requirement in that case will be that the size allows for at least 16 ports on the front panel (16 x 400G = 6.4T, and possibly more).

If maintaining the QSFP size is important, the only suitable 400G standard currently under development is 400GBASE-DR4, which specifies 4 optical 100G PAM4 channels operating over 500m of PSM4 cable. In addition, 4-wavelength 100G PAM4 implementations over duplex SM fiber are expected to be defined in the future. Based on what has been demonstrated in QSFP28-CWDM4 modules, the need for only 4 wavelengths increases the likelihood that the power limits of QSFP can be met. However, unless a 4 x 100G electrical interface also becomes available (“CDAUI-4”), the number of electrical input lanes must still be increased to at least 8. This requires a new module definition, and various solutions are currently being considered. Among them is moving beyond the pluggable modules to a new paradigm based on on-board-optics (OBO). OBO modules have the advantage of moving the optics closer to the ASIC, which can help increase signal integrity and lower power by eliminating retimers. The Consortium for On-Board Optics (COBO) was recently formed to accelerate the development of such solutions and has the support of at least one large data center provider. Other solutions are also on the table, such as those proposed by the microQSFP MSA, which aims to realize the function of a QSFP in a size similar to an SFP, resulting in a front panel bandwidth of 7.2Tbps.

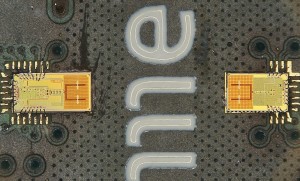

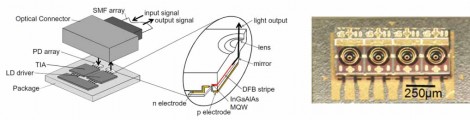

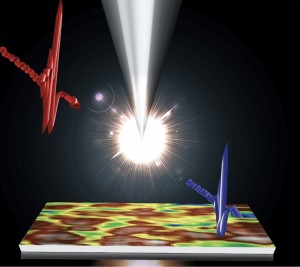

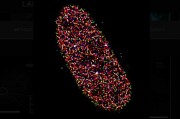

Example of a 4x25G Lens-Integrated Surface Emitting Laser (LISEL) Array for 100G On-Board Optics.

Key elements of data centers

Optical modules are key to building the switching fabrics of mega-scale data centers. The transition from 40G to 100G is imminent, and several possible paths exist for the next stage of evolution, which will likely be based around 200G or 400G interconnects. New optical module concepts will be necessary, and optical module vendors must work closely with networking equipment manufacturers and data center operators to ensure the development of solutions that meet future data center requirements of cost and power per gigabit.

Written by Kohichi Tamura, Director in Oclaro Japan’s Engineering and Marketing Department

Back to Features

Back to Features